3 years ago, mainly for nostalgic reasons, I started playing OpenRA, a free and open-source re-implementation of the engine of C&C real-time strategy games (Red Alert, Dune 2000, Tiberian Dawn, ...). OpenRA supports online multiplayer games, but the mod I came for (Dune 2000) wasn't played much online, so I instead joined the most popular mod crew, Red Alert. Quickly enough, I got schooled by veterans, got into competitive play, and ended up participating in the Red Alert Global League (RAGL, the biggest seasonal tournament) with... mixed results.

But this blog post is not about my pathetic pro gamer career.

While there are highly skilled players in the community, and probably enough players overall, training has always been a source of struggle and frustration for me, and the main reason is the lack of a ranking system for players. This means each game is a coin flip with regard to your opponent's level, making it hard to evaluate your own level, and thus your progression. That's why I decided to have a go at building a competitive ladder.

Initial attempt

Game server instances are independent: anyone can set one up, and it will be advertised on the master server so that any player can see it, join, and wait in the lobby for one or more players before starting a game.

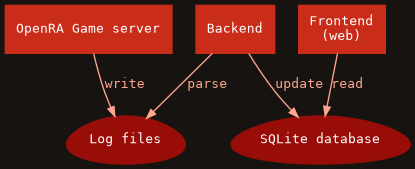

With that in mind, I innocently thought that I could intercept the game results from the server side (typically parsing the logs, maybe with some adjustments), build a database of the outcomes, and set up a dumb web frontend on top. "A small week-end of work" I said to myself. How naive I was...

It was no surprise no one had done it before. The thing is, while the server does see who is playing against whom, it doesn't actually know the outcome of the game because it acts as a simple game packet router between players, with no understanding of the game itself. A game server is actually relatively easy to implement, and alternative implementations already exist.

A complex solution would involve emulating the whole game server side to figure out the outcome. This was considered way too complex and very likely not efficient at all.

Instead, the selected solution was to adjust the protocol and make the clients transmit the current win/loss state to the server. A synchronization mechanism based on hashing the game state was already in place (used to detect bugs or identify malicious clients), and it was extended to include a bit field representing the wins/losses of the players at a point in time (team play is supported, up to 64 players).
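
To make the bit field idea concrete, here is a minimal sketch in Python. The layout is an assumption for illustration only (one bit per player slot, set once that player is defeated, carried as a little-endian 64-bit integer); the function names are hypothetical and this is not the actual OpenRA wire format.

```python
import struct

MAX_PLAYERS = 64  # one bit per player slot, hence the 64-player limit


def pack_defeated(defeated_slots):
    """Pack player slot indices into a single 64-bit mask (hypothetical layout)."""
    mask = 0
    for slot in defeated_slots:
        if not 0 <= slot < MAX_PLAYERS:
            raise ValueError(f"slot {slot} out of range")
        mask |= 1 << slot
    return mask


def unpack_defeated(mask):
    """Return the sorted list of slot indices whose bit is set."""
    return [slot for slot in range(MAX_PLAYERS) if (mask >> slot) & 1]


def to_wire(mask):
    """Serialize the mask as 8 little-endian bytes (assumed encoding)."""
    return struct.pack("<Q", mask)


def from_wire(data):
    """Inverse of to_wire()."""
    return struct.unpack("<Q", data)[0]
```

With such a layout, the whole win/loss state fits in 8 bytes no matter how many players are in the game, which keeps the cost of piggybacking it on an existing periodic packet small.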

Refining the idea

The initial draft of the protocol change didn't take long, so I started using that new information to write logs server side while implementing my initial idea in parallel. Unfortunately, I had to wait for the PR to be merged so that all clients would actually start sending the missing information. Getting this single change into a release was blocking everything else. As soon as it was upstreamed, I could just run my own server implementation with all the requirements for the backend and web frontend.

It was basically starting to look like this:

The initial PR implementing this was actually fairly simple, so I thought it would not take long before it got upstreamed. Unfortunately for me, instead of being merged, the PR started never-ending discussions about server and protocol design, and revealed disagreements between the developers about the course of action to follow. For months, I was asked several times to make changes in opposite directions...

Spoiler alert: it took 10 months for the PR to be merged.

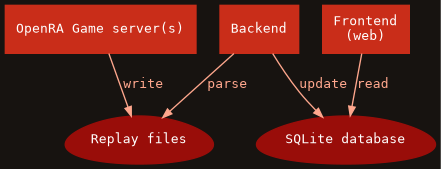

Recording replays

With the PR going nowhere, I started going further in the implementation to demonstrate the whole system. First of all, instead of providing logs, we could actually record the whole game replays (a network recording of the whole game that can be played back in the game client). That's typically done client side, and they contain the game outcome. With the server now obtaining the missing bits of information, it opened the possibility to actually have these replays recorded server side as well (game servers see all the traffic since they just re-route it between players) with the proper header (actually a footer) containing all the important game information.

With the replay recording feature, we could have the backend use them instead of parsing the server logs. This has several benefits:

- The replays being available server side means we can address and verify the games in case an incident is reported (cheating, various abuse, honest mistake, ...)

- Removing the file removes the entry automatically (no need to alter logs or database)

- Synchronization is all about files instead of merging log files or databases, so it opens up the possibility to have multiple server instances transparently

- We don't have to define an intermediate format for transporting the information, the replay already exists for that

- The replay contains all the information we can imagine using on the front-end (let's say if we want to expose the most played factions by a player, stats on the map played, average game duration of a player, etc.)
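
The "files as the source of truth" idea from the list above can be sketched as follows. This is only an illustration: the `games` table, the `sync_replays` function, and the file extension filter are assumptions, not the ladder's actual schema or code.

```python
import os
import sqlite3


def sync_replays(db, replay_dir, extension=".orarep"):
    """Make the (hypothetical) games table mirror the replay files on disk.

    New files get an entry, and entries whose file disappeared are dropped,
    so deleting a replay is enough to remove the game.
    """
    db.execute("CREATE TABLE IF NOT EXISTS games (filename TEXT PRIMARY KEY)")
    on_disk = {f for f in os.listdir(replay_dir) if f.endswith(extension)}
    known = {row[0] for row in db.execute("SELECT filename FROM games")}
    added = sorted(on_disk - known)
    removed = sorted(known - on_disk)
    db.executemany("INSERT INTO games (filename) VALUES (?)", [(f,) for f in added])
    db.executemany("DELETE FROM games WHERE filename = ?", [(f,) for f in removed])
    db.commit()
    return added, removed
```

Since the directory is the only state that matters, merging the games of several server instances boils down to copying files into the same directory before the next run.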

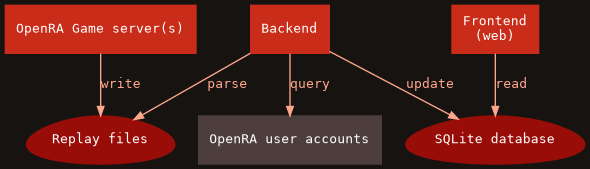

OpenRA accounts

One of the greatest things that happened to OpenRA recently was the OpenRA account service, which is basically a centralized authentication system. This is actually an essential feature for a ladder and I'm glad I didn't have to implement it. If the game has 2 authenticated players, the backend can just query the OpenRA account service to get the information on those players.

The ladder will only accept games with authenticated players so that we can actually trace players properly.

Disconnect event

The last issue to address was player disconnection. If a player disconnects, the game doesn't automatically associate it with a surrender event, and thus the game state remains undefined. The reason behind this is partly related to players' expectations during multiplayer games (an obscure story about keeping dead players' buildings present). I won't go into the details but it seemed to be kind of taboo to try to address the core issue.

The ladder needed an answer to that to prevent a losing player from disconnecting to avoid registering a loss. The initial attempt at addressing this was to basically demux (split into packets) the replays, look for Disconnect events, and correlate them with the associated clients. This was kind of painful and required a lot of complex logic in the backend.

Fortunately, we managed to reach an agreement on how to simplify the handling for the ladder: the disconnect frame index was simply added to the game summary in the footer. That way, if the game state was undefined at the end of the match, we were now able to derive it from the disconnect frame indices (the smallest disconnect frame identifies the player who disconnected first). Not ideal, but definitely an order of magnitude better than decoding the whole replay.
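
The fallback described above could look something like the sketch below. It assumes a two-player game, treats the first player to disconnect as the loser, and uses hypothetical data shapes (plain dicts keyed by player name) rather than the real footer format.

```python
def first_disconnected(disconnect_frames):
    """Return the player with the smallest disconnect frame, or None.

    disconnect_frames maps player -> frame index, or None if the player
    never disconnected. On a tie, the first player in dict order is returned.
    """
    seen = {p: f for p, f in disconnect_frames.items() if f is not None}
    if not seen:
        return None
    return min(seen, key=seen.get)


def resolve_outcomes(outcomes, disconnect_frames):
    """Fill in undefined outcomes from disconnect frames (1v1 assumption).

    outcomes maps player -> "won", "lost", or None when undefined.
    """
    if all(state is not None for state in outcomes.values()):
        return dict(outcomes)  # the game already reported a result
    loser = first_disconnected(disconnect_frames)
    if loser is None:
        return dict(outcomes)  # nothing to derive the result from
    return {p: ("lost" if p == loser else "won") for p in outcomes}
```

For example, `resolve_outcomes({"alice": None, "bob": None}, {"alice": 1200, "bob": 950})` marks `bob` as the loser because his disconnect frame is the smallest.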

Technical summary

The backend ended up being a small Python project, using the TrueSkill™ algorithm for ranking, and run regularly by a cron job to process replay files. Similarly, I didn't try to make something crazy complex for the web so I made a simple Flask project. After all, this is just a dumb non-interactive SQLite reader serving web pages. I started with the backend generating static pages, but this wouldn't actually scale well with the increasing number of players and match details.

The whole thing has been released as Free Software (GPLv3) on GitHub: OpenRA ladder. The complete setup is extensively described in the README if you're interested in more details.

Launch!

7 months after the previous release (20200503), OpenRA finally released a new playtest ("beta"): 20201213. Ignoring the experimental devtests ("alpha"), this was the first version to include the long awaited protocol change allowing servers to be informed of the win/defeat state, finally unlocking the ability to deploy the ladder.

A few weeks before the playtest, I paid for some hosting, bought the oraladder.net domain, and deployed the frontend as a demo using the replays from the most recent and popular global tournament: RAGL Season 9. Around the first devtests, the infrastructure was activated. A few iterations on the devtests were necessary, and thanks to a few players and streamers, some issues were uncovered (mostly in game) before the playtest.

The reception to the ladder's launch was almost unanimously positive and I'm glad it's finally up and running. One thing I didn't expect though was how obsessed players were with the ranking algorithm itself. Days were spent discussing and comparing the different algorithms, which would be best, etc. I ended up adding a primitive Elo mode in the backend, and made sure adding more would be trivial. TrueSkill™ being kind of in a gray zone legally speaking, we might need to switch to something else. Maybe Glicko?
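
For readers unfamiliar with Elo, here is a minimal sketch of the textbook update for a two-player game. The K-factor of 32 and the 400-point scale are the conventional defaults, used here as assumptions; they are not necessarily the values the ladder uses.

```python
def expected_score(rating_a, rating_b):
    """Probability that A beats B according to the standard Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(winner, loser, k=32.0):
    """Return the new (winner, loser) ratings after one game.

    k is the K-factor: the maximum number of points a single game can move.
    """
    delta = k * (1.0 - expected_score(winner, loser))
    return winner + delta, loser - delta
```

Two players at 1500 end up at 1516 and 1484 after a game, while beating a much weaker opponent moves both ratings by only a fraction of a point. That is the property that makes cherry-picking easy opponents less rewarding than it first seems.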

Going further

Scaling

Game servers are handled by different entities, so a trusted network needs to be set up to reduce the bus factor: basically, I need to add different sources of replays from other server admins I can trust.

This could also mean replays from different mods, but that will require adjustments on the backend and frontend. While both are mod agnostic, they currently work for one mod at a time.

Ideally, I would hand the whole infrastructure to the OpenRA organization, but the workload is probably too high for them currently. This may change in the future if the ladder is really successful. If that's the case, it could also simplify the communication between the ladder backend and the official OpenRA user accounts service (which is admittedly clumsy today because I have to build my own desynchronized subset of the account database by HTTP queries on the service).

RAGL infrastructure

Adjusting the ladder for independent tournaments (and in particular RAGL) is currently a work in progress at the top of my TODO list. Organizers struggle with the logistics and still need a lot of manual intervention. The ladder infrastructure will serve as a reference for the automation of RAGL.

Nakama

During the development, the Nakama project was mentioned. In the mid to long term, this could replace the frontend and part of the backend. I'm fairly certain it can do everything the current one is doing, and more. That said, the complex part of the ladder is definitely not the frontend. But it may pave the way for the next item.

Matchmaking

For anyone familiar with competitive gaming, or simply video games in general, it might have struck you that a core feature is missing to make this complete: matchmaking. Since there is no mechanism or institution to force matchups between players, some players can abuse the system by choosing to play only against certain other players. It's too early to say what impact this will have, but a matchmaking system will most certainly be needed in the future.

But it's also important to realize that a matchmaking infrastructure requires an entirely new level of dedication to set up compared to this ladder (which is actually pretty simple). The reason is that it involves modifications at every level of the stack:

- The game client needs a dedicated interface

- The game servers, the ladder, and the matchmaking service must be part of a common infrastructure to communicate in real time

- The protocol and servers likely need adjustments as well

While resilient, the decentralized nature of the game server instances is probably going to be one of the main difficulties to address in order to solve that issue.

Tooling

In parallel with all of this, I had to craft some tooling to help me debug the network/replay code, which I ended up releasing a bit before the ladder since some people were interested in it. This is available in the ora-tools repository. If you're interested in the code, beware before you dig into it: the protocol is carrying some historical burden (to put it mildly), and to add insult to injury, I tried to make the code compatible with older versions. So don't be surprised if you see entangled layers of YAML flavours encapsulated in a noise of binary packets. You've been warned.

Bots

The ora-tools project also contains some partial network code which I initially used to write a bot. It was actually working and able to communicate in-game with the players. I wanted to use it as a key element in the building of the ladder, but in the end it wasn't necessary. I didn't include it in the repository because it wasn't in a very useful state yet, but I could in the future if there is a demand.

That said, I'd love to see a playing bot API similar to what was done with PySC2. If you're into this AI bubble, maybe something similar to AlphaStar can be worked out with OpenRA, but that's probably yet another lifetime project. A prerequisite is to have the complete game emulation first, which is going to be tricky to keep in sync with the game engine.

More stats

With all the information contained in the replays, we could actually expose a lot of information on the players. Since everything is public, it might actually be problematic from a competitive point of view: what happens if we bring to light that a particular player has a >90% loss rate against a given faction? That can be used against them in competitive events. The ideal solution would probably be to make this information only available to the player, but that requires a close integration with the OpenRA authentication service.

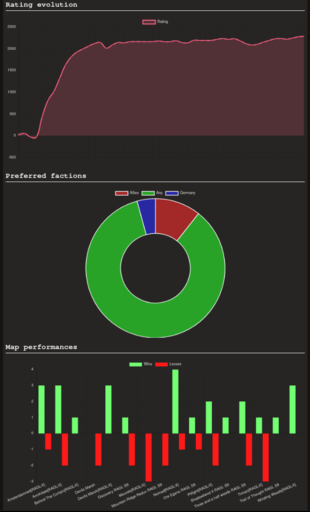

That said, a quick poll on the Competitive Discord server showed that most players weigh the benefits of accessing these stats much higher than the risk of revealing their weaknesses publicly. So basic global and player stats were implemented.
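
As an illustration of the kind of per-player stat discussed here, the following sketch computes a player's loss rate against each opposing faction. The game records are hypothetical dicts with `winner`, `loser`, `winner_faction` and `loser_faction` keys, and are assumed to be 1v1 games; the real data comes from the replays and is shaped differently.

```python
from collections import defaultdict


def loss_rate_vs_faction(games, player):
    """Map each opposing faction to the fraction of games the player lost."""
    counts = defaultdict(lambda: [0, 0])  # faction -> [losses, games played]
    for game in games:
        if game["loser"] == player:
            entry = counts[game["winner_faction"]]
            entry[0] += 1
            entry[1] += 1
        elif game["winner"] == player:
            counts[game["loser_faction"]][1] += 1
    return {faction: losses / played for faction, (losses, played) in counts.items()}
```

A result such as `{"Soviet": 0.9}` is exactly the sort of number an opponent could exploit, which is why exposing it publicly was put to a poll first.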

Now to go further, we would need advanced statistics, such as player build orders. Doing that unfortunately requires complete emulation of the game (again) to be reliable. The ora-tools project provides an attempt at this, but a better approach would be to have native tooling in the OpenRA project (so that we don't duplicate the whole engine for emulation).

Conclusion

That was quite a ride. Like so many projects I started these past years, I thought I'd never see the end of it. And while I'm pretty satisfied with the result today, I unfortunately won't have time to play the game again with the same dedication I had before. All I'm hoping for is that it will benefit the competitive community and become a cornerstone for the future of OpenRA.

I'd like to finish this post with thanks to the core team of OpenRA, which has supported me in this adventure, and in particular pchote, who dedicated a lot of his time helping in many areas. Also a shout-out to my fellow competitive comrades who encouraged me to finish this project. Cheers.