About two years ago, I tried to improve the support of the GIF encoding in FFmpeg to make it at least decent. This notably led to the addition of the transparency mechanism in the GIF encoder. While this is not always optimal depending on your source, it is in the most common cases. Still, this was merely an attempt to prevent shaming the encoder too much.

But recently at Stupeflix, we needed a way to generate high quality GIF for the Legend app, so I decided to work on this again.

All the features presented in this blog post are available in FFmpeg 2.6, and will be used in the next version of Legend app (probably around March 26th).

TL;DR: go to the Usage section to see how to use it.

Initial improvements (2013)

Let's observe the effect of the transparency mechanism introduced in 2013 in the GIF encoder:

% ffmpeg -v warning -ss 45 -t 2 -i big_buck_bunny_1080p_h264.mov -vf scale=300:-1 -gifflags -transdiff -y bbb-notrans.gif

% ffmpeg -v warning -ss 45 -t 2 -i big_buck_bunny_1080p_h264.mov -vf scale=300:-1 -gifflags +transdiff -y bbb-trans.gif

% ls -l bbb-*.gif

-rw-r--r-- 1 ux ux 1.1M Mar 15 22:50 bbb-notrans.gif

-rw-r--r-- 1 ux ux 369K Mar 15 22:50 bbb-trans.gif

This option is enabled by default, so you will only want to disable it in case your image has very much motion or color changes.

The other implemented compression mechanism was the cropping, which is basically a way of allowing to redraw only a sub rectangle of the GIF and keep the surrounding untouched. In case of a movie, it is rarely very useful. I will get back to this later on.

But anyway, since then, I didn't make any progress on it. And while it might not be that obvious in the picture above, there are quite a few shortcomings regarding the quality.

The 256 colors limitation

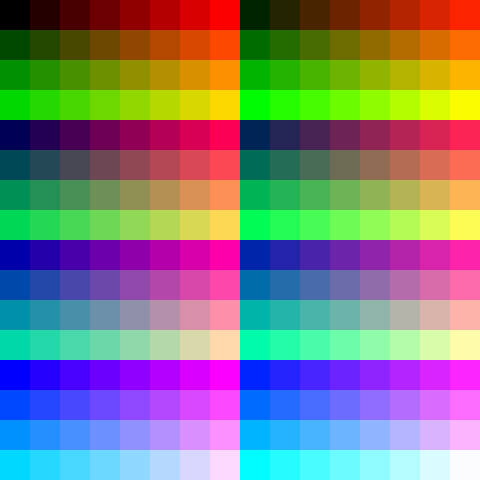

As you probably know, GIF is limited to a palette of 256 colors. And by default, FFmpeg just uses a generic palette that tries to cover the whole color space in order to support the largest variety of content:

Ordered and error diffusing dithering

In order to circumvent this problem, dithering is used. In the

Big Buck Bunny GIF above, the ordered Bayer dithering is applied. It is easily

recognizable by its 8x8 crosshatch pattern. While it's not the prettiest, is

has many benefits such as being predictable, fast, and actually preventing

banding effects and similar visual glitches.

Most of the other dithering methods you will find out there are error based. The principle is that a single color error (the difference between the color picked in the palette and the expected one) will spread all over the image, causing a "swarming effect" between frames, even in areas were the source was completely identical between frames. While this often provides a much better quality, it completely kills the compression of GIF:

% ffmpeg -v warning -ss 45 -t 2 -i big_buck_bunny_1080p_h264.mov -vf scale=300:-1:sws_dither=ed -y bbb-error-diffusal.gif

% ls -l bbb-error-diffusal.gif

-rw-r--r-- 1 ux ux 1.3M Mar 15 23:10 bbb-error-diffusal.gif

Better palettes

The first step to improve GIF quality is to define a better palette. The GIF format stores a global palette, and you can re-define the palette for one picture (or sub-picture; each frame overlay on the previous one, but it can overlay at a specific offset with a smaller size). The per frame palette does replace the global palette only for one frame. As soon as you stop defining a palette, it will fall-back on the global one. This means that you can not define a palette for a range of frames like you would be tempted to do (typically defining a new palette at each scene change).

So said differently, you will have to follow the model of one single global palette, or one palette per frame.

One palette per frame (not implemented)

I originally started with implementing a computation of the palette per frame, but this had the following drawbacks:

- The overhead: a 256 colors palette is 768B and it is not part of the LZW algorithm mechanism so it is not compressed. And since it had to be stored at each frame, this means an overhead of 150 kbits/sec for a 25 FPS footage. It is mostly negligible though.

- My initial test was giving a brightness blinking effect because of these palette changes, which wasn't pretty at all.

These are the two reasons I didn't follow that path and decided to compute a global palette instead. Now that I think back, it might be relevant to retry that approach, because the color quantization is in a way better state than it was in my initial tests.

It would also be possible to store the same palette at each frame for ranges of frames (typically at scene changes like mentioned before). Or better, only for the sub-rectangle that changes.

All of this is left as an exercise for the reader, patch welcome. Feel free to contact me if you are interested in this.

One global palette (implemented)

Having one global palette means a 2-pass mechanism (unless you are willing to store all the video frames in memory).

The first pass is the computation of a palette for the whole presentation. This is where the new palettegen filter comes into play. The filter makes a histogram of all the colors of every frame, and generates a palette out of these.

Some trivia on the technical aspect: the filter is implementing a variant of the algorithm from Paul Heckbert's Color Image Quantization for Frame Buffer Display (1982) paper. Here are the differences (or specificities regarding undefined behavior in the paper) I remember doing:

- It's using a full resolution color histograms. So instead of using a histogram of down-sampled RGB 5:5:5 as key as suggested in the paper, the filter uses a hash table for the 16 million of possible RGB 8:8:8 colors.

- The segmentation of the box is still done at the median point, but the selection of the box to split is done according to the variance of the colors in the box (a box with a larger variance of colors will be preferred for the cut-off).

- This was not defined in the paper as far as I could tell, but the averaging of the colors in the box is done depending on the importance of the color.

- When splitting a box along an axis (red, green, or blue), in case of equality green is preferred over red, which is in turn preferred over blue.

So anyway, this filter does the color quantization, and generate a palette

(generally saved into a PNG file).

It will typically look like this (upscaled):

Color mapping and dithering

The second pass is then handled by the paletteuse filter, which as you guessed from the name will use that palette to generate the final color quantized stream. Its task is to find the most appropriate color in the generated palette to represent the input color. This is also where you will decide which dithering method to use.

And again some trivia on the technical aspect:

- While the original paper proposes only one dithering method, the filter implements 5 of them.

- Just like

palettegen, the color resolution (mapping the 24-bit input color to a palette entry) is done without destruction of the input. It is achieved through an iterative implementation of K-d Tree (with K=3 obviously, one dimension for each of the RGB components) and a cache system.

Using these two filters will allow you to encode GIF like this (single global palette, no dithering):

Usage

Running the 2 passes manually with the same parameters can be a bit annoying to adjust the parameters for each pass, so I recommend to write a simple script such as:

#!/bin/sh

palette="/tmp/palette.png"

filters="fps=15,scale=320:-1:flags=lanczos"

ffmpeg -v warning -i $1 -vf "$filters,palettegen" -y $palette

ffmpeg -v warning -i $1 -i $palette -lavfi "$filters [x]; [x][1:v] paletteuse" -y $2

...which can be used like this:

% ./gifenc.sh video.mkv anim.gif

The filters variable contains here:

- An adjustment of the frames per second (reduced to 15 can make it visually jerky but will make the final GIF smaller)

- A scale using

lanczosscaler instead of the default (bilinearcurrently). It is recommended that you rescale usinglanczosorbicubicas they are far superior tobilinear. Your input will very likely be more blurry if you don't.

Extracting just a sample

It is unlikely that you will encode a complete movie, so you might be tempted

to use -ss and -t (or similar) options to select a segment. If you do that,

be very sure to have both as input options (before the -i). For example:

#!/bin/sh

start_time=12:23

duration=35

palette="/tmp/palette.png"

filters="fps=15,scale=320:-1:flags=lanczos"

ffmpeg -v warning -ss $start_time -t $duration -i $1 -vf "$filters,palettegen" -y $palette

ffmpeg -v warning -ss $start_time -t $duration -i $1 -i $palette -lavfi "$filters [x]; [x][1:v] paletteuse" -y $2

If you don't, it will cause problem at least for the first pass where the output will never have more than one frame (the palette), so that won't do what you want.

One alternative is to pre-extract with stream copy the sample you want to encode, with something like:

% ffmpeg -ss 12:23 -t 35 -i full.mkv -c:v copy -map 0:v -y video.mkv

If the stream copy is not accurate enough, you can then add a trim filter. For example:

filters="trim=start_frame=12:end_frame=431,fps=15,scale=320:-1:flags=lanczos"

Getting the best out of the palette generation

Now we can start looking at the fun part. In the palettegen filter, the main

and probably only tweaking you will want to play with is the stats_mode

option.

This option will basically allow you to specify if you are more interested in

the whole/overall video, or only what's moving. If you use stats_mode=full

(the default), all pixels will be part of the color statistics. If you use

stats_mode=diff, only for the pixel that differs from previous frame will be

accounted.

Note: to add options to a filter, use it like this:

thefilter=opt1=value1:opt2=value2

Following is an example to illustrate how it affects the final output:

The first GIF is using stats_mode=full (the default). The background doesn't

change for the whole presentation, as a result the sky gets a lot of attention

color wise. On the other hand, the moving text ends up with a very limited

subset of colors. As a result, the fade out of the text gets hurt:

On the other hand, the second GIF is using stats_mode=diff, which is favoring

what's moving. And indeed, the fade out of the text is much better, at the cost

of dithering glitches in the sky:

Getting the best out of the color mapping

The paletteuse filter has slightly more options to play with. The most

obvious one is the dithering (dither option). The only predictable dithering

available is bayer, all the others are error diffusion based.

If you do want to use bayer (because you have a high speed or size issue),

you can play with the bayer_scale option to lower or increase its crosshatch

pattern.

Of course, you can also completely disable the dithering by using

dither=none.

Concerning the error diffusal dithering, you will want to play with

floyd_steinberg, sierra2 and sierra2_4a. For more details on these, I'm

redirecting you to DHALF.TXT.

For the lazy, floyd_steinberg is one of the most popular, and sierra2_4a is

a fast/smaller version of sierra2 (and is the default), diffusing through 3

pixels instead of 7. heckbert is the one documented in the paper I mentioned

previously, and is just included as a reference (you probably won't want it).

Here is a small preview of different dithering modes:

original (31.82K) :

dither=bayer:bayer_scale=1 (132.80K):

dither=bayer:bayer_scale=2 (118.80K):

dither=bayer:bayer_scale=3 (103.11K):

dither=floyd_steinberg (101.78K):

dither=sierra2 (89.98K):

dither=sierra2_4a (109.60K):

dither=none (73.10K):

Finally, after playing with the dithering, you might be interested to learn

about the option diff_mode. To quote the documentation:

Only the changing rectangle will be reprocessed. This is similar to GIF cropping/offsetting compression mechanism. This option can be useful for speed if only a part of the image is changing, and has use cases such as limiting the scope of the error diffusal dither to the rectangle that bounds the moving scene (it leads to more deterministic output if the scene doesn't change much, and as a result less moving noise and better GIF compression).

Or said differently: if you want to use error diffusion dithering on your image for the background even though it's static, enable this option to limit the spreading of the error all over the picture. Here is a typical case where it's relevant:

Notice how the dithering on the face of the monkey changes only when both top and bottom texts are moving at the same time (that is, in the very last frames here).